Happy Horse 1.0.

Now on Pexo.

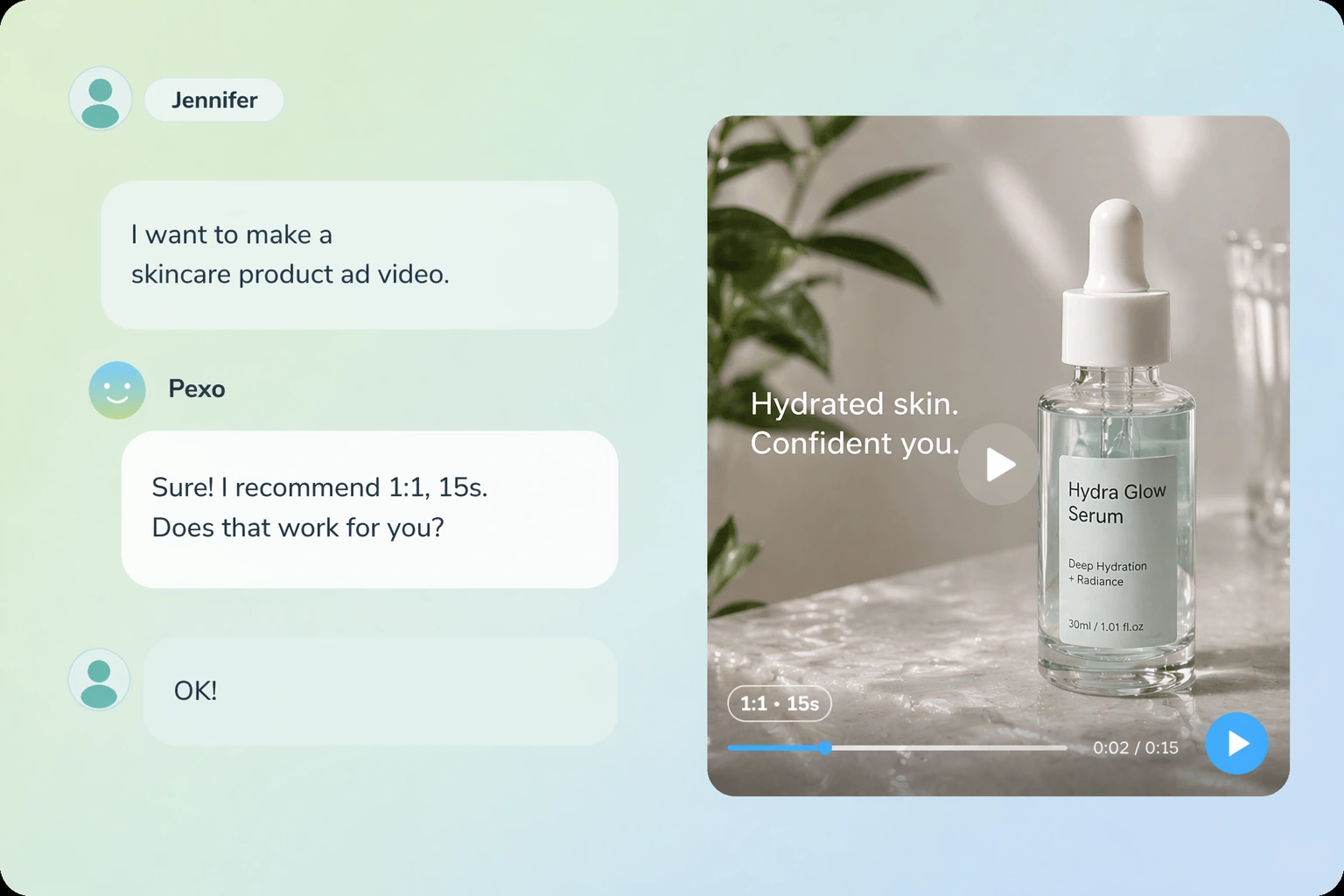

On Pexo, you describe what you want in plain language, Pexo routes your request to Happy Horse 1.0, and you get back a finished 1080p video with native audio. No model configuration or prompt engineering is required.

Happy Horse 1.0 Video Samples: 1080p With Native Audio

Every video shown here was produced through Happy Horse 1.0 on Pexo using a plain-language description, with no prompt syntax or model configuration. This is the default output quality any user receives from their very first generation.

What Makes Happy Horse 1.0 Technically Different: Full Capability Breakdown

Nothing needs to be selected or configured manually; you describe your intent and Pexo applies the right features automatically.

Video and Audio Generated Together in One Forward Pass

Happy Horse 1.0's 40-layer unified Transformer puts video frames and audio tokens into the same sequence, so dialogue, ambient sound, and Foley effects are generated alongside the video rather than added in a separate step afterward. The video you receive is already complete.
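As a rough illustration of what "the same sequence" can mean, the sketch below merges video and audio tokens into one list that a single Transformer could attend over. The actual token layout of Happy Horse 1.0 is not documented here; the `Token` type, the `build_joint_sequence` helper, and the ordering by time step are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Token:
    """Hypothetical token record; not the model's real data format."""
    modality: str   # "video" or "audio"
    time_step: int  # coarse position on the clip's timeline
    value: int      # placeholder for the token's content


def build_joint_sequence(video: List[Token], audio: List[Token]) -> List[Token]:
    """Merge both modalities into one sequence ordered by time step.

    Assumption: within a time step, video tokens come before audio tokens.
    The point is only that both modalities share one sequence, so one
    forward pass sees them together.
    """
    merged = list(video) + list(audio)
    return sorted(merged, key=lambda t: (t.time_step, t.modality != "video"))


video_tokens = [Token("video", step, 100 + step) for step in range(3)]
audio_tokens = [Token("audio", step, 200 + step) for step in range(3)]
joint = build_joint_sequence(video_tokens, audio_tokens)
print([f"{t.modality[0]}{t.time_step}" for t in joint])
```

In a pipeline that adds audio afterward, the two lists would instead be produced by separate models and aligned in a later step.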

Native Lip-Sync Across Seven Languages With Ultra-Low Error Rate

Happy Horse 1.0 supports native lip synchronization in seven languages, handled directly by the model without a third-party lip-sync layer. Self-reported word error rate (WER) data places Happy Horse 1.0 at 14.60%, compared to 19.23% for LTX 2.3 and 40.45% for OVI 1.1 in the same evaluation framework; lower is better. For multilingual creators, this means language-accurate synchronization comes from a single model.
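For readers unfamiliar with the metric, here is a minimal sketch of the standard word error rate definition: word-level edit distance divided by the number of reference words. The evaluation pipeline behind the figures above is not described on this page, so the function name and the sample sentences are illustrative only.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One substituted word and one dropped word out of six reference words.
sample_wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"{sample_wer:.2%}")
```

Under this definition, a WER of 14.60% corresponds to roughly one word-level error for every seven reference words.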

Turn a Description Into a Ranked-Number-One Text-to-Video Output

Happy Horse 1.0 holds an Elo score of 1333 in the Artificial Analysis text-to-video category, roughly 60 points ahead of the second-ranked model. In an Elo system, a lead of that size is a meaningful and consistent advantage rather than statistical noise. Through Pexo, you describe your scene, motion, lighting, and camera angle in natural language, and Pexo routes the request to Happy Horse 1.0 without requiring any prompt syntax. The model does the rest.

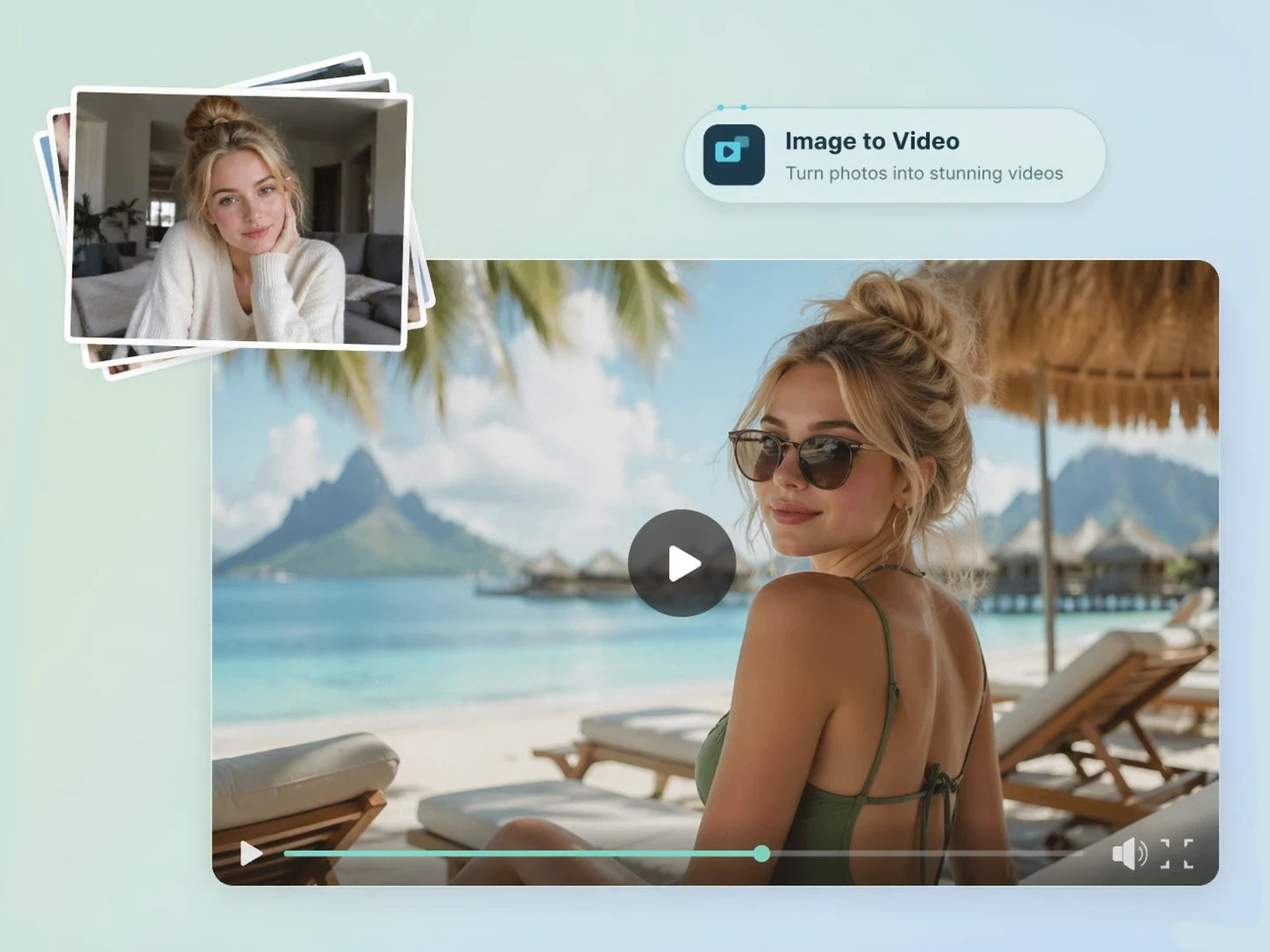

The Top-Ranked Image-to-Video Engine: Elo 1392, Blind-Tested

Happy Horse 1.0's visual fidelity and motion consistency hold up under blind testing conditions, where it scores Elo 1392 in the Artificial Analysis image-to-video category. Through Pexo, the workflow is straightforward: upload a reference image, describe the motion or atmosphere you want, and Pexo handles every generation decision from that point forward. No technical understanding of the model's unified pipeline is required.

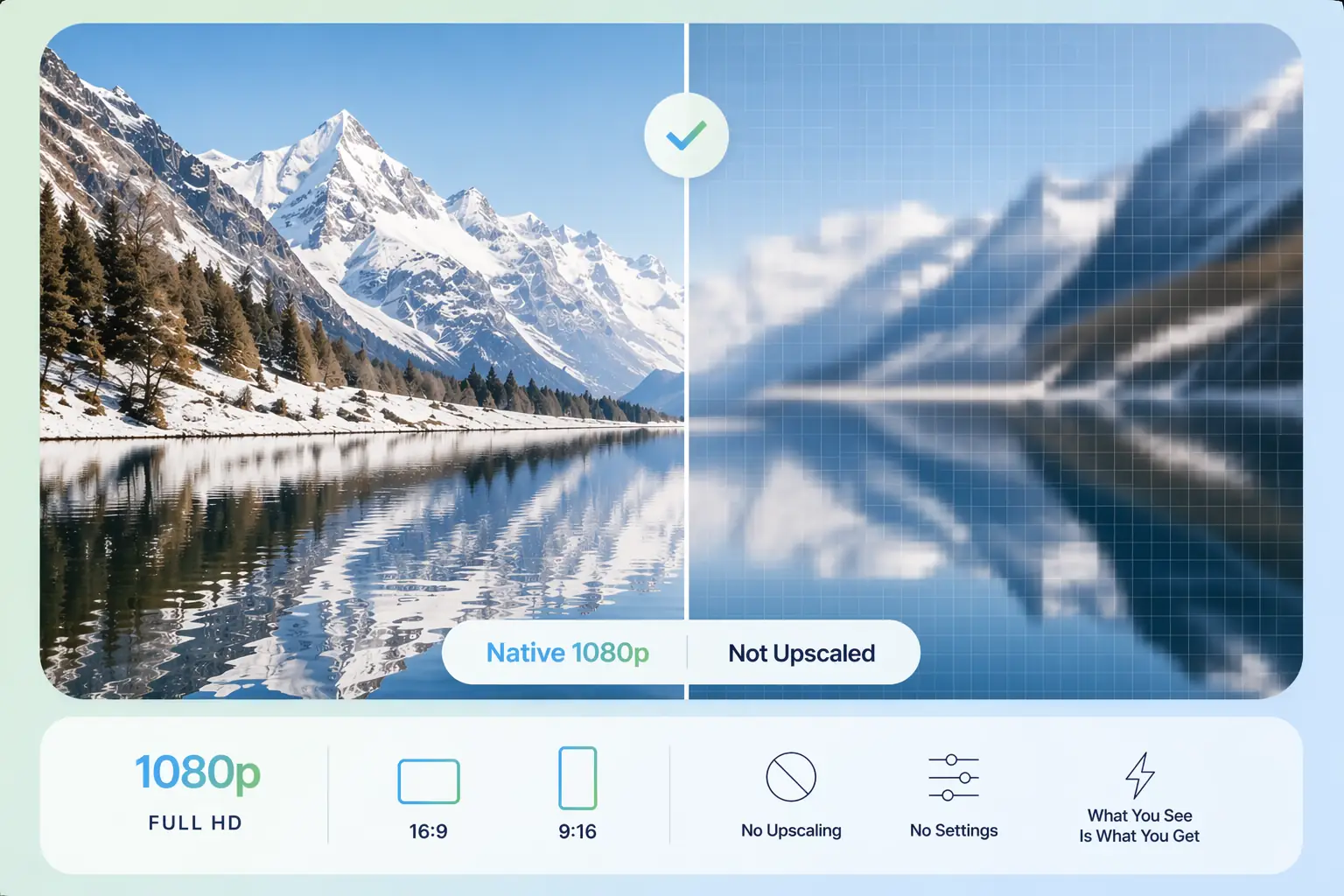

Native 1080p Resolution: Not Upscaled, Not a Separate Step

Happy Horse 1.0 generates clips of 5 to 8 seconds at native 1080p resolution in standard aspect ratios including 16:9 and 9:16, with no post-hoc upscaling applied to reach that resolution. Through Pexo, output quality is automatic and consistent across every generation.

38-Second 1080p Generation on H100: Speed Without Quality Tradeoff

Happy Horse 1.0 generates a 1080p clip in approximately 38 seconds on a single NVIDIA H100 GPU. The speed comes from 8-step DMD-2 distillation without classifier-free guidance, which reduces the number of denoising steps required without introducing visual quality penalties. With Happy Horse 1.0 on Pexo, you get output that is both fast and high-quality.
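To see why fewer steps and no classifier-free guidance (CFG) matter, it helps to count network evaluations. A standard CFG sampler evaluates the network twice per denoising step (once conditioned, once unconditioned), while a guidance-free sampler evaluates it once. The sketch below does that arithmetic; the 50-step CFG baseline is an assumption chosen for illustration and does not describe any specific competing model.

```python
def network_evaluations(steps: int, uses_cfg: bool) -> int:
    """Count denoiser forward passes for one generated clip."""
    if steps <= 0:
        raise ValueError("steps must be positive")
    # CFG needs a conditional and an unconditional pass at every step.
    passes_per_step = 2 if uses_cfg else 1
    return steps * passes_per_step


distilled = network_evaluations(steps=8, uses_cfg=False)
baseline = network_evaluations(steps=50, uses_cfg=True)  # hypothetical baseline
print(distilled, baseline, baseline / distilled)
```

Wall-clock time also depends on model size, resolution, and hardware, so the ratio of evaluation counts is only a rough guide to relative speed.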

Happy Horse 1.0 vs. Seedance 2.0, Kling 3.0, and Sora 2: Benchmark Rank, Native Audio, and Lip-Sync Compared

This is a capability-level comparison anchored to third-party Artificial Analysis benchmark data where available. Several dimensions below represent features that competing models do not offer rather than areas of relative performance.

| Feature | Happy Horse 1.0 | Seedance 2.0 | Kling 3.0 | Sora 2 |
|---|---|---|---|---|
| Artificial Analysis T2V rank | #1 (Elo 1333) | #2 (~Elo 1273) | Top 5 | Top 5 |
| Artificial Analysis I2V rank | #1 (Elo 1392) | #2 (~Elo 1344) | Top 5 | — |
| Audio generation method | Joint single-pass | Post-processing | Post-processing | No native audio |
| Native lip-sync language support | 7 languages | — | — | — |
| Output resolution (native) | 1080p | 1080p | 1080p | 1080p |
| Generation speed benchmark | ~38s on H100 | — | — | — |
| Model architecture | 15B unified Transformer, 40-layer | — | — | Diffusion Transformer |
| Text-to-video input support | ✓ | ✓ | ✓ | ✓ |
| Image-to-video input support | ✓ | ✓ | ✓ | — |
| Access via Pexo | ✓ | ✓ | ✓ | ✓ |

Sources: Happy Horse 1.0 — Artificial Analysis Video Arena · Happy Horse 1.0 — fal.ai · Seedance 2.0 — ByteDance · Kling 3.0 — Kuaishou Technology · Sora 2 — OpenAI

How to Run Happy Horse 1.0 on Pexo: No Setup, Three Steps

No model knowledge, prompt engineering, or API configuration is required at any point in this process. Pexo owns every production decision; your only responsibility is communicating what you want to create.

Describe Your Idea or Upload an Image

Type a description in plain language or upload a reference image, whichever matches your creative intent. Happy Horse 1.0 accepts both text and image inputs, and Pexo reads your intent without requiring any specific format or structure.

Pexo Runs Joint Audio-Video Generation

Pexo routes your request to Happy Horse 1.0 and triggers its single-pass unified generation, producing video frames and audio together in one forward pass. You configure nothing; the model handles all parameters automatically.

Download High-Resolution Video With Native Audio

Your output is a complete, publish-ready 1080p video with synchronized native audio, not a draft requiring post-processing in another tool. This is the end of the separate-audio workflow that other video platforms impose.

What People Build With Happy Horse 1.0 on Pexo

Happy Horse 1.0 covers a wide range of creative and commercial video use cases, from cinematic short-form content to multilingual branded video with synchronized audio. Whatever you are building, you describe it and Pexo delivers it.

Product Ad Video

Sell products with stunning video ads

Social Media

Post scroll-stopping videos in minutes

Explainer Video

Simplify complex ideas with clear visuals

Short Story

Tell compelling stories in short form

Anime Video

Generate stunning anime art & videos with AI

Music Video

Turn any song into a beat-synced video

Questions About Happy Horse 1.0 on Pexo

What is Happy Horse 1.0 and who developed it?

Happy Horse 1.0 is a 15-billion-parameter AI video generation model built around a unified 40-layer self-attention Transformer that produces video and synchronized audio together in a single forward pass. It was developed by a team formerly operating under Alibaba's Taotian Group Future Life Laboratory, led by Zhang Di, the former Vice President of Kuaishou and the technical architect behind Kling AI.

How does Happy Horse 1.0 generate audio without a separate processing step?

Happy Horse 1.0 puts video frame tokens and audio tokens into the same sequence within its unified Transformer, so the model generates dialogue, ambient sound, and Foley effects alongside the video frames rather than producing them independently and aligning them afterward. Because audio is part of the same forward pass as video, synchronization is a structural property of the output rather than a correction applied after generation.

Why is Happy Horse 1.0 ranked number one on Artificial Analysis?

The Artificial Analysis Video Arena uses an Elo rating system built on blind human preference votes, where users compare two videos generated from the same prompt without knowing which model produced either one. Happy Horse 1.0 reached Elo 1333 in text-to-video and Elo 1392 in image-to-video, leading the benchmark by roughly 60 and 48 points respectively over the second-ranked model in each category.
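To put those gaps in concrete terms, the sketch below converts a rating difference into an expected head-to-head preference rate using the conventional logistic Elo formula with a 400-point scale. This assumes the arena follows that standard formula, which is not confirmed here, and the second-place ratings are the approximate values from the comparison table.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of pairwise votes that A wins against B."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Ratings from this page; runner-up values are approximate.
t2v_share = elo_expected_score(1333, 1273)  # text-to-video, 60-point gap
i2v_share = elo_expected_score(1392, 1344)  # image-to-video, 48-point gap
print(f"text-to-video: {t2v_share:.1%}, image-to-video: {i2v_share:.1%}")
```

Under these assumptions, a 60-point lead corresponds to being preferred in a little under 59% of direct comparisons with the runner-up, and a 48-point lead to about 57%.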

Which languages does Happy Horse 1.0 support for lip-sync?

Happy Horse 1.0 supports native lip synchronization in seven languages: Mandarin, Cantonese, English, Japanese, Korean, German, and French. Synchronization is handled natively by the model itself, with no third-party lip-sync tool required.

How does Happy Horse 1.0 compare to Kling 3.0 and Sora 2 in blind testing?

In Artificial Analysis blind testing, Happy Horse 1.0 ranks above both Kling 3.0 and Sora 2 in the text-to-video category, with its Elo 1333 score representing a consistent preference advantage under conditions where voters cannot identify which model produced the output. Happy Horse 1.0 also offers native joint audio-video generation and seven-language lip-sync, capabilities neither Kling 3.0 nor Sora 2 provide natively.

Can I generate a video from an image using Happy Horse 1.0?

Yes. Happy Horse 1.0 supports image-to-video generation through the same unified model used for text-to-video, with an Elo score of 1392 in the Artificial Analysis image-to-video category. Through Pexo, you upload a reference image, describe the motion or atmosphere you want, and the model animates it forward.

How do I access Happy Horse 1.0 without building an API integration?

Through Pexo: no API key, no API integration, and no technical configuration of any kind is required. You describe what you want in plain language and Pexo handles model selection, generation, and delivery on your behalf.

Is Happy Horse 1.0 free to use on Pexo?

Pexo offers a free plan that includes access to Happy Horse 1.0 alongside other leading AI models. Check the Pexo pricing page for current plan details and generation limits.

Happy Horse 1.0 Is on Pexo: Describe It and It Generates

The number one ranked video model takes one sentence to use.